This overview will cover the basic tarball setup for your Mac. If you’re an engineer building applications on CDH and becoming familiar with all the rich features for designing the next big solution, it becomes essential to have a native Mac OSX install. Sure, you may argue that your MBP, with its four-core hyper-threaded i7, SSD, and 16GB of DDR3 memory, is sufficient for spinning up a VM, and in most instances, such as using a VM for a quick demo, you’re right. However, when experimenting with a heavier, more resource-intensive workload, you’ll want to explore a native install.

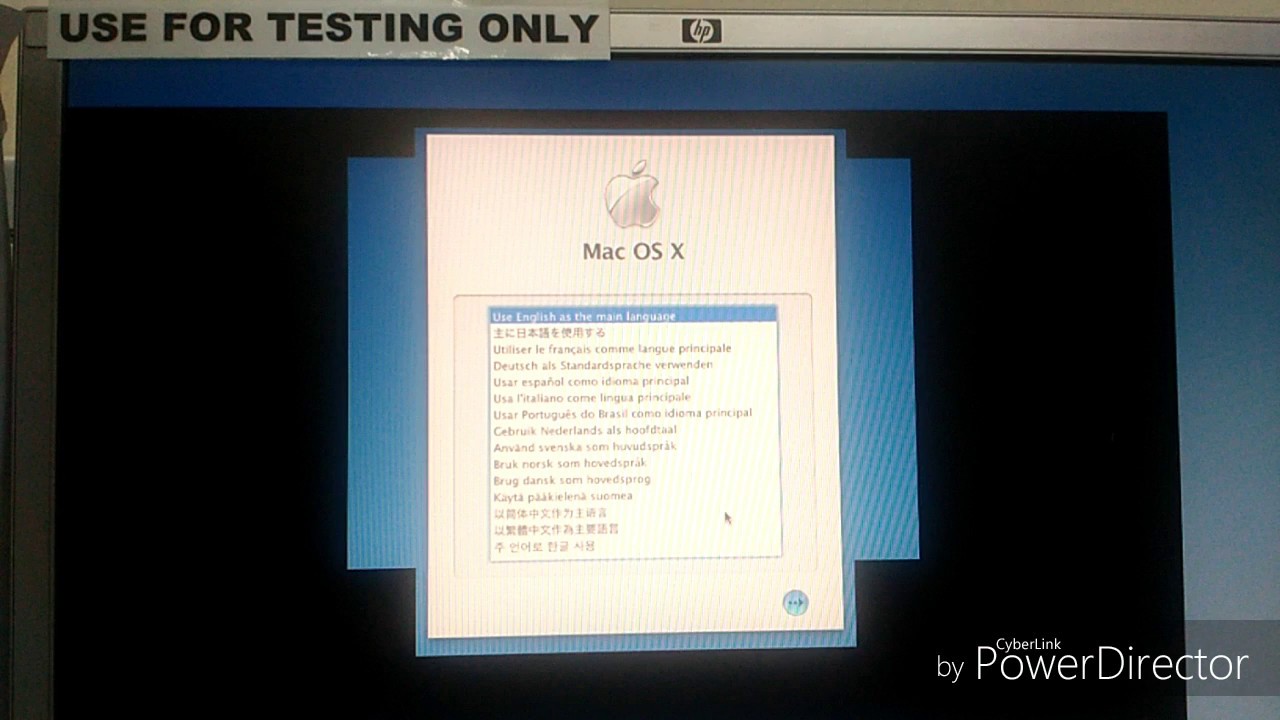


In this post, I will cover the setup of a few basic dependencies and the necessities to run HDFS, MapReduce with YARN, Apache ZooKeeper, and Apache HBase. Use it as a guideline for setting up your local CDH box, with the objective of enabling you to build and run applications on the Apache Hadoop stack.

Note: This process is not supported, so you should be comfortable acting as your own sysadmin. With that in mind, the configurations throughout this guideline are intended for your default bash shell environment, which can be set in your `~/.profile`.

Dependencies

Install the Java version that is supported for the CDH version you are installing.

In my case for. Historically the JDK for Mac OSX was only available from Apple, but since JDK 1.7 it’s available directly through Oracle’s Java downloads. Download the .dmg (in the example below, `jdk-7u67-macosx-x64.dmg`) and install it.

Verify and configure the installation:

- Old Java path: `/System/Library/Frameworks/JavaVM.framework/Home`
- New Java path: `/Library/Java/JavaVirtualMachines/jdk1.7.0_67.jdk/Contents/Home`
- `export JAVA_HOME='/Library/Java/JavaVirtualMachines/jdk1.7.0_67.jdk/Contents/Home'`

Note: After installing the Oracle JDK, you’ll notice that the original path used to manage versioning, `/System/Library/Frameworks/JavaVM.framework/Versions`, is not updated, so you now have control to manage your versions independently.

Enable ssh on your Mac by turning on Remote Login. You can find this option under the Apple icon in your menu bar: System Preferences > Sharing. Check the box for Remote Login to enable the service.

Allow access for: “Only these users: Administrators”

Note: In this same window, you can modify your computer’s hostname.

Enable password-less ssh login to localhost for MRv1 and HBase. Open your terminal and generate an RSA or DSA key.
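The key steps in this section can be collected into one re-runnable helper. What follows is a minimal POSIX sh sketch, assuming OpenSSH’s `ssh-keygen` and the default RSA key name `id_rsa`; `setup_localhost_ssh` is a hypothetical name. Besides generating the key, it appends the public key to `authorized_keys`, which is what actually lets `ssh localhost` log in without a password. It skips both steps when they have already been done.

```shell
#!/bin/sh
# setup_localhost_ssh SSH_DIR (hypothetical helper; pass "$HOME/.ssh" in practice)
# Generates an RSA key with an empty passphrase and authorizes it for logins
# to this same account. Safe to run more than once.
setup_localhost_ssh() {
  ssh_dir="$1"
  key="$ssh_dir/id_rsa"
  mkdir -p "$ssh_dir"
  chmod 700 "$ssh_dir"
  # Never overwrite an existing key (ssh-keygen would prompt).
  if [ ! -f "$key" ]; then
    ssh-keygen -q -t rsa -P '' -f "$key" || return 1
  fi
  auth="$ssh_dir/authorized_keys"
  touch "$auth"
  chmod 600 "$auth"
  # Append the public key only if that exact line is not already present.
  if ! grep -qxF -e "$(cat "$key.pub")" "$auth"; then
    cat "$key.pub" >> "$auth"
  fi
}
```

Run `setup_localhost_ssh "$HOME/.ssh"`, then `ssh localhost`. The first connection still asks you to confirm the host key.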

`ssh-keygen -t rsa -P ''`

Continue through the key generator prompts (use the default options). Then authorize the new key for logins to your own account: `cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys`

Test: `ssh localhost`

Homebrew

Another toolkit I admire is Homebrew, a package manager for OSX. While the Xcode developer command-line tools are great, Homebrew’s savvy naming conventions and ease of use get the job done in a fun way.

I haven’t needed Homebrew for much beyond installing the dependencies required to build native Snappy libraries for Mac OSX and for easily installing MySQL for Hive. Snappy is commonly used within HBase, HDFS, and MapReduce for compression and decompression.

CDH

Finally, the easy part: the CDH tarballs are very nicely packaged and easily available from Cloudera’s repository.

I’ve downloaded tarballs for CDH 5.1.0. Download and explode the tarballs in a `lib` directory, where you can manage the latest versions with a simple symlink, as shown below. Although Mac OSX’s “Make Alias” feature is bi-directional, do not use it. Instead, use the command-line `ln -s` command, such as `ln -s sourcefile targetfile`.

- `/Users/jordanh/cloudera/`
  - `cdh5.1/`
    - `hadoop -> /Users/jordanh/cloudera/lib/hadoop-2.3.0-cdh5.1.0`
    - `hbase -> /Users/jordanh/cloudera/lib/hbase-0.98.1-cdh5.1.0`
    - `hive -> /Users/jordanh/cloudera/lib/hive-0.12.0-cdh5.1.0`
    - `zookeeper -> /Users/jordanh/cloudera/lib/zookeeper-3.4.5-cdh4.7.0`
  - `ops/`
    - `dn`
    - `logs/hadoop`, `logs/hbase`, `logs/yarn`
    - `nn/`
    - `pids`
    - `tmp/`
    - `zk/`

You’ll notice above that you’ve created a handful of directories under a folder named `ops`.
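If you’d rather script this layout, the POSIX sh sketch below creates it under any base directory and prints matching `~/.profile` exports, including the `JAVA_HOME` set earlier. The function names `make_cdh_layout` and `cdh_profile`, the `CDH` variable, and the choice of which variables to export are assumptions for illustration, because the post’s own profile isn’t shown. `HADOOP_CONF_DIR` and the `*_LOG_DIR` and `*_PID_DIR` variables are ones the stock Hadoop, YARN, and HBase daemon scripts read.

```shell
#!/bin/sh
# make_cdh_layout BASE (hypothetical helper): creates the lib/, cdh5.1/ and ops/
# layout shown above under BASE (the post uses /Users/jordanh/cloudera).
make_cdh_layout() {
  base="$1"
  mkdir -p "$base/lib" "$base/cdh5.1"
  for sub in dn nn pids tmp zk logs/hadoop logs/hbase logs/yarn; do
    mkdir -p "$base/ops/$sub"
  done
  # Version-free symlinks into lib/. With -n, an existing link is replaced
  # rather than followed, so re-running (or upgrading) just re-points it.
  for pair in hadoop:hadoop-2.3.0-cdh5.1.0 hbase:hbase-0.98.1-cdh5.1.0 \
      hive:hive-0.12.0-cdh5.1.0 zookeeper:zookeeper-3.4.5-cdh4.7.0; do
    name=${pair%%:*}
    target=${pair#*:}
    ln -sfn "$base/lib/$target" "$base/cdh5.1/$name"
  done
}

# cdh_profile BASE JAVA_HOME (hypothetical helper): prints export lines for
# ~/.profile. CDH is an illustrative variable name, not a CDH convention.
cdh_profile() {
  base="$1"
  java_home="$2"
  cat <<EOF
export JAVA_HOME="$java_home"
export CDH="$base/cdh5.1"
export HADOOP_HOME="\$CDH/hadoop"
export HADOOP_CONF_DIR="\$HADOOP_HOME/etc/hadoop"
export HBASE_HOME="\$CDH/hbase"
export HIVE_HOME="\$CDH/hive"
export ZOOKEEPER_HOME="\$CDH/zookeeper"
export HADOOP_LOG_DIR="$base/ops/logs/hadoop"
export YARN_LOG_DIR="$base/ops/logs/yarn"
export HBASE_LOG_DIR="$base/ops/logs/hbase"
export HADOOP_PID_DIR="$base/ops/pids"
export YARN_PID_DIR="$base/ops/pids"
export HBASE_PID_DIR="$base/ops/pids"
export PATH="\$JAVA_HOME/bin:\$HADOOP_HOME/bin:\$HADOOP_HOME/sbin:\$HBASE_HOME/bin:\$PATH"
EOF
}
```

For example, run `make_cdh_layout "$HOME/cloudera"`, then `cdh_profile "$HOME/cloudera" "$(/usr/libexec/java_home -v 1.7)" >> ~/.profile`. The second command points the log and PID variables at the `ops` directories.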

You’ll use them later to customize the configuration of the essential components for running Hadoop. Set your environment properties according to the paths where you’ve exploded your tarballs.

HDFS properties (`hdfs-site.xml`):

| Property | Value | Description |
|---|---|---|
| `dfs.namenode.name.dir` | `/Users/jordanh/cloudera/ops/nn` | Determines where on the local filesystem the DFS name node should store the name table (fsimage). If this is a comma-delimited list of directories, then the name table is replicated in all of the directories, for redundancy. |
| `dfs.datanode.data.dir` | `/Users/jordanh/cloudera/ops/dn/` | Determines where on the local filesystem a DFS data node should store its blocks. If this is a comma-delimited list of directories, then data will be stored in all named directories, typically on different devices. Directories that do not exist are ignored. |
| `dfs.datanode.http.address` | `localhost:50075` | The datanode HTTP server address and port. If the port is 0, then the server will start on a free port. |
| `dfs.replication` | `1` | Default block replication. The actual number of replications can be specified when the file is created. The default is used if replication is not specified at create time. |

I attribute the YARN and MRv2 configuration and setup from the. I will not digress into the specifics of each property or the orchestration and details of how YARN and MRv2 operate, but there’s some great information that my colleague Sandy has already shared for. Be sure to make the necessary adjustments per your system’s memory and CPU constraints.
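Before adjusting, it helps to confirm that the sizing values are mutually consistent. The POSIX sh sketch below is one way to do that. `check_yarn_sizing` is a hypothetical helper that takes the post’s values (NodeManager memory and vcores, scheduler minimum and maximum allocations, task container size, and task heap). It flags obvious contradictions and prints how many minimum-size containers fit by memory and by vcores. Whether the vcore figure actually limits scheduling depends on the scheduler and resource calculator your ResourceManager uses, so treat the smaller of the two numbers as the likely ceiling rather than a guarantee.

```shell
#!/bin/sh
# check_yarn_sizing NM_MB NM_VCORES MIN_MB MAX_MB MIN_VCORES MAX_VCORES TASK_MB HEAP_MB
# (hypothetical helper) Prints inconsistencies plus container counts;
# returns non-zero if any check fails.
check_yarn_sizing() {
  nm_mb=$1; nm_vc=$2; min_mb=$3; max_mb=$4
  min_vc=$5; max_vc=$6; task_mb=$7; heap_mb=$8
  rc=0
  if [ "$min_mb" -gt "$max_mb" ]; then
    echo "minimum-allocation-mb is larger than maximum-allocation-mb"; rc=1
  fi
  if [ "$max_mb" -gt "$nm_mb" ]; then
    echo "maximum-allocation-mb is larger than resource.memory-mb"; rc=1
  fi
  if [ "$min_vc" -gt "$max_vc" ] || [ "$max_vc" -gt "$nm_vc" ]; then
    echo "vcore allocation limits are inconsistent with resource.cpu-vcores"; rc=1
  fi
  if [ "$task_mb" -gt "$max_mb" ]; then
    echo "task memory.mb is above maximum-allocation-mb"; rc=1
  fi
  # The -Xmx heap must fit inside the container with room for non-heap JVM memory.
  if [ "$heap_mb" -ge "$task_mb" ]; then
    echo "java.opts heap (-Xmx) leaves no headroom in the task container"; rc=1
  fi
  echo "containers by memory: $(( nm_mb / min_mb ))"
  echo "containers by vcores: $(( nm_vc / min_vc ))"
  return "$rc"
}
```

With the values used in this post, `check_yarn_sizing 8192 4 1024 2048 1 2 1024 768` passes and prints `containers by memory: 8` and `containers by vcores: 4`. On a Mac, `sysctl -n hw.memsize` (bytes) and `sysctl -n hw.ncpu` report the physical memory and logical CPU count to size against.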

Per the image below, it is easy to see how these parameters will affect your machine’s performance when you execute jobs. Next, edit the following files as shown.

YARN properties (`yarn-site.xml`):

| Property | Value | Description |
|---|---|---|
| `yarn.nodemanager.aux-services` | `mapreduce_shuffle` | The valid service name should only contain a-zA-Z0-9_ and cannot start with numbers. |
| `yarn.log-aggregation-enable` | `true` | Whether to enable log aggregation. |
| `yarn.nodemanager.remote-app-log-dir` | `hdfs://localhost:8020/tmp/yarn-logs` | Where to aggregate logs to. |
| `yarn.nodemanager.resource.memory-mb` | `8192` | Amount of physical memory, in MB, that can be allocated for containers. |
| `yarn.nodemanager.resource.cpu-vcores` | `4` | Number of CPU cores that can be allocated for containers. |
| `yarn.scheduler.minimum-allocation-mb` | `1024` | The minimum allocation for every container request at the RM, in MB. Memory requests lower than this won’t take effect, and the specified value will get allocated at minimum. |
| `yarn.scheduler.maximum-allocation-mb` | `2048` | The maximum allocation for every container request at the RM, in MB. Memory requests higher than this won’t take effect, and will get capped to this value. |
| `yarn.scheduler.minimum-allocation-vcores` | `1` | The minimum allocation for every container request at the RM, in terms of virtual CPU cores. Requests lower than this won’t take effect, and the specified value will get allocated at minimum. |
| `yarn.scheduler.maximum-allocation-vcores` | `2` | The maximum allocation for every container request at the RM, in terms of virtual CPU cores. Requests higher than this won’t take effect, and will get capped to this value. |

MapReduce properties (`mapred-site.xml`):

| Property | Value | Description |
|---|---|---|
| `mapreduce.jobtracker.address` | `localhost:8021` | |
| `mapreduce.jobhistory.done-dir` | `/tmp/job-history/` | |
| `mapreduce.framework.name` | `yarn` | The runtime framework for executing MapReduce jobs. Can be one of local, classic, or yarn. |
| `mapreduce.map.cpu.vcores` | `1` | The number of virtual cores required for each map task. |
| `mapreduce.reduce.cpu.vcores` | `1` | The number of virtual cores required for each reduce task. |
| `mapreduce.map.memory.mb` | `1024` | Larger resource limit for maps. |
| `mapreduce.reduce.memory.mb` | `1024` | Larger resource limit for reduces. |
| `mapreduce.map.java.opts` | `-Xmx768m` | Heap size for child JVMs of maps. |
| `mapreduce.reduce.java.opts` | `-Xmx768m` | Heap size for child JVMs of reduces. |
| `yarn.app.mapreduce.am.resource.mb` | `1024` | The amount of memory the MR AppMaster needs. |
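The HDFS, YARN, and MapReduce properties in this post go into `hdfs-site.xml`, `yarn-site.xml`, and `mapred-site.xml` in the Hadoop configuration directory. If you’d rather generate those files than edit them by hand, here is a minimal POSIX sh sketch. `write_site` and `write_cdh_confs` are hypothetical names, and the `/Users/jordanh/cloudera` prefix becomes a base-directory argument. One entry is an assumption not listed in this post: `fs.defaultFS` in `core-site.xml`, set to `hdfs://localhost:8020` to match the NameNode address in `remote-app-log-dir`.

```shell
#!/bin/sh
# write_site FILE NAME VALUE [NAME VALUE ...] (hypothetical helper)
# Writes a Hadoop *-site.xml with one <property> per NAME/VALUE pair. Values are
# written verbatim, so they must not contain XML special characters (&, <, >).
write_site() {
  file="$1"; shift
  {
    printf '<?xml version="1.0"?>\n<configuration>\n'
    while [ "$#" -ge 2 ]; do
      printf '  <property>\n    <name>%s</name>\n    <value>%s</value>\n  </property>\n' "$1" "$2"
      shift 2
    done
    printf '</configuration>\n'
  } > "$file"
}

# write_cdh_confs CONF_DIR BASE (hypothetical helper): the values listed in this post.
write_cdh_confs() {
  conf="$1"; base="$2"
  mkdir -p "$conf"
  write_site "$conf/hdfs-site.xml" \
    dfs.namenode.name.dir "$base/ops/nn" \
    dfs.datanode.data.dir "$base/ops/dn/" \
    dfs.datanode.http.address localhost:50075 \
    dfs.replication 1
  write_site "$conf/yarn-site.xml" \
    yarn.nodemanager.aux-services mapreduce_shuffle \
    yarn.log-aggregation-enable true \
    yarn.nodemanager.remote-app-log-dir hdfs://localhost:8020/tmp/yarn-logs \
    yarn.nodemanager.resource.memory-mb 8192 \
    yarn.nodemanager.resource.cpu-vcores 4 \
    yarn.scheduler.minimum-allocation-mb 1024 \
    yarn.scheduler.maximum-allocation-mb 2048 \
    yarn.scheduler.minimum-allocation-vcores 1 \
    yarn.scheduler.maximum-allocation-vcores 2
  write_site "$conf/mapred-site.xml" \
    mapreduce.jobtracker.address localhost:8021 \
    mapreduce.jobhistory.done-dir /tmp/job-history/ \
    mapreduce.framework.name yarn \
    mapreduce.map.cpu.vcores 1 \
    mapreduce.reduce.cpu.vcores 1 \
    mapreduce.map.memory.mb 1024 \
    mapreduce.reduce.memory.mb 1024 \
    mapreduce.map.java.opts -Xmx768m \
    mapreduce.reduce.java.opts -Xmx768m \
    yarn.app.mapreduce.am.resource.mb 1024
  # Assumption: not listed in this post; the port matches remote-app-log-dir.
  write_site "$conf/core-site.xml" fs.defaultFS hdfs://localhost:8020
}
```

For example: `write_cdh_confs "$HADOOP_HOME/etc/hadoop" "$HOME/cloudera"`. The helper overwrites any existing files with those names, so back up anything you have already customized. Passing flat name/value pairs keeps it dependency-free. For anything beyond a flat list like this one, editing the XML directly is simpler.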
